What is Cross Validation?

Cross Validation is a technique in machine learning to assess the generalizability of a model. It involves partitioning the data into subsets, training the model on some subsets (training set) and testing it on the remaining subsets (validation set).

The goal is to validate the model's performance across different subsets of data, reducing the risk of overfitting and providing a more accurate measure of its performance on unseen data.

What is the difference between cross validation in statistics and cross validation in machine learning?

In both statistics and machine learning, cross-validation is used to assess model performance. However, the emphasis slightly varies. In statistics, cross-validation often focuses on the model's ability to predict new data accurately, emphasizing the understanding and interpretation of data patterns. In machine learning, cross-validation is more focused on the model's predictive performance and its ability to generalize to unseen data, with a stronger emphasis on optimizing and improving the model for practical applications.

What is k-fold cross validation?

K-fold cross-validation is a method where the data set is divided into 'k' number of subsets (or folds). The model is trained on 'k-1' folds and tested on the remaining one fold. This process is repeated 'k' times, with each fold serving as the test set once. The model's performance is then averaged over these 'k' trials. This method is beneficial because it ensures that every data point is used for both training and testing, providing a comprehensive assessment of the model's performance.

What is the leave-one-out cross-validation method?

Leave-one-out cross-validation (LOOCV) is a specific case of k-fold cross-validation where 'k' equals the number of data points in the dataset. In LOOCV, the model is trained on all data points except one and then tested on the excluded data point. This process is repeated for each data point in the dataset. LOOCV provides a thorough assessment of the model but can be computationally expensive for large datasets.

What are the most common cross-validation techniques using Python?

In Python, especially with libraries like scikit-learn, the most common cross-validation techniques include:

K-Fold Cross-Validation: Using KFold or StratifiedKFold for classification tasks.

Leave-One-Out (LOO): Using LeaveOneOut, particularly for small datasets.

Leave-P-Out (LPO): Using LeavePOut, where 'P' data points are left out in each iteration.

Repeated K-Fold: Using RepeatedKFold or RepeatedStratifiedKFold for multiple repetitions of K-Fold cross-validation.

Shuffle & Split: Using ShuffleSplit or StratifiedShuffleSplit for random permutations cross-validation.

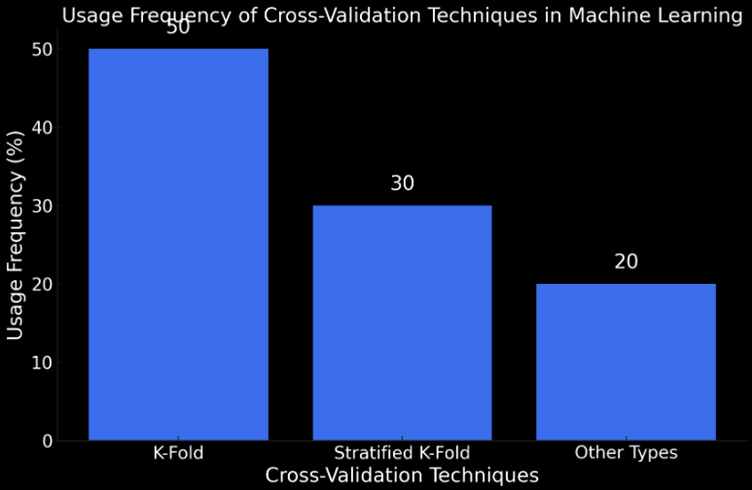

Interesting Data about Cross Validation

Here are some fascinating statistics and insights about Cross Validation:

Definition and Purpose: Cross-validation is a model validation technique for assessing how results of a statistical analysis will generalize to an independent dataset. It is a resampling method that uses different portions of data to test and train a model on different iterations, primarily used in predictive settings to estimate a model's performance in practice.

Process: A typical round of cross-validation involves partitioning data into complementary subsets (training and validation sets). Multiple rounds are often performed using different partitions, and the validation results are averaged over these rounds to provide a more accurate estimate of model prediction performance.

Types of Cross-Validation: Cross-validation can be categorized into exhaustive and non-exhaustive types. Exhaustive cross-validation includes methods that learn and test on all possible ways to divide the original sample into training and validation sets. In contrast, non-exhaustive methods do not compute all ways of splitting the original sample.

K-Fold Cross-Validation: In k-fold cross-validation, the data is partitioned into 'k' equal-sized subsets or folds. Each fold is used exactly once as the validation set while the remaining serve as the training set. This process is repeated 'k' times, and the results are averaged. An advantage of this method is that all observations are used for both training and validation.

Stratified K-Fold Cross-Validation: This variation ensures that each fold is a good representative of the whole by having approximately equal mean response values or the same proportion of class labels in case of binary classification.